Posts in category Maple TA

Doing more cool stuff with Maple TA without being a Maple Expert

Hybrid Assessment: Combining paper-based and digital questions in MapleTA

In August 2016 we upgraded to Maple TA version 2016, a moment I had been looking forward to, since it carried some very promising new features regarding essay questions, improved manual online grading and a new question type I had requested: Scanned Documents.

Some teachers would like students to make a sketch or show their steps in a complex calculation. Because these actions are hard to perform with regular computer room hardware (mouse and keyboard only), it would be nice to keep them on paper. But how do you cleverly connect this paper to the gradebook? In my blog post from May 2013 I mentioned an example of a connecting feature from ExamOnline that is now implemented in Maple TA.

Last Friday we had the first exam that actually applied this feature: about a hundred students had to mark certain areas in an image of the brain on paper. The papers were collected, scanned and uploaded as a batch to MapleTA’s gradebook. The exam reviewers found the scanned papers in the gradebook and graded the responses manually.

How does this work?

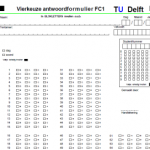

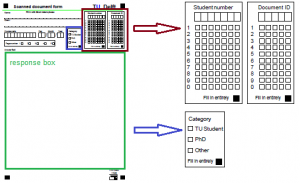

First, in order to use the batch upload feature, you need to create a paper form that can be recognized in the scanning process. Our basis was the ‘Sonate’-form (see image 1). Sonate is our multiple choice test system for item- and test analysis. This form has certain markings that can be recognized by the scan software, Teleform.

This form was converted into a ‘Maple TA form’ (see image 2). The form was adjusted by creating a response box (in green), an entry for student and Document ID (in brown) and a user category (in blue). The document ID is created by MapleTA. It is a unique numerical code per student in the test.

In the scan software a script creates a zip file containing all the scanned documents and a CSV file that tells MapleTA which documents are in the zip. As soon as the files are created and saved, an e-mail is automatically sent to support. The support desk uploads the files to MapleTA and notifies the reviewers that all is set.

In the gradebook a button is shown. When you click it, the PDF file opens so the reviewer can grade the student’s response.

The MapleTA help describes this process in detail. There you can also find how to manually upload the files to the gradebook. Maplesoft extended this feature by also allowing students to upload their own ‘attachment’. This can be any of the following file types:

This feature is very promising for our online students. At the moment, when using online proctoring, they send attachments by e-mail. Now they might use MapleTA instead, as long as the file type is allowed.

MapleTA and Mobius User Summit 2016 in Vienna

Last week I attended Maplesoft’s MapleTA and Mobius User Summit 2016 in Vienna. Those were two and a half days well spent. This was already the third summit, after Amsterdam (2014) and New York (2015). It is good to meet the same people from the other summits, but also good to see new faces as MapleTA becomes more popular in Europe.

For me the summit started with two training sessions, or should I say: demo sessions. The first one was about Advanced Question Creation in MapleTA. Jonathan zoomed in on Maple graded questions. Since I recently took my first steps in Maple graded questions (see my blog post doing cool stuff..), I welcomed it very much. He addressed some differences between coding in Maple and MapleTA and gave us useful tips on how to get around them (involving the general solution using the ‘convert’ procedure).

I got quite jealous when he said that at Birmingham they created grading scripts they can call in the ‘grading code field’, which makes creating a Maple graded question a lot easier. Unfortunately these scripts cannot easily be transferred to other installations of MapleTA. The good news: he said he is talking to Maplesoft about integrating them in the software. Let’s hope it gets picked up. Jonathan also referred to the MapleTA Community, where any user can pose questions or respond to others’ problems. It is active, though still in beta.

The second demo, by Aaron, was on creating lessons and a slide show in Mobius. Although we at Delft had done some pilot projects at the beginning of the year, I was pleasantly surprised by the improvements to some features. The software is not officially released just yet, and the first version will not have all of the features Jim sketched for us during the Mobius Roadshow in September. It holds quite a promise. At TU Delft we will continue piloting the software.

The conference on Thursday and Friday contained several user presentations covering the themes of the conference:

- Shaping Curriculum

- Content Creation (with Mobius)

- User Experience Mobius

- Integrating with your Technology

- The future of Online Education

Eight different institutions presented their implementation, course examples, their success stories and troubles. Most interesting though, were the moments between the different themes, when there was plenty of time to talk with the other participants: elaborating on their presentation or just getting to know each other. But also the opportunity to talk to Maplesoft people addressing some issues and near future developments was very valuable.

On Friday Steve Furino (University of Waterloo) led an interactive session on what the participants’ top 3 of future developments should be. This resulted in a list of about 30 items (which did contain multiple entries on the same topic). Usability, regrading and universal coding across Maple and MapleTA made up the top 3.

Mobius in particular prompted a lot of requests from fellow participants to work together on creating and exchanging materials. We saw some lovely examples from Waterloo: chemistry for engineers, precalculus and computer science. Feel free to click those links and check out the courses. Waterloo is thinking of setting up a workshop focused on developing online STEM courses (using Mobius). The idea is that participants will leave with a completed online STEM course that they can implement immediately. This workshop should last somewhere between 2 and 4 weeks on site (Waterloo, Canada). If you would like to participate in their viability evaluation, follow the link. It sounds like a great idea to me, but I wonder whether being away for 4 weeks would be a problem for most people who are interested.

I left the conference full of ideas and a personal action list. Already looking forward to the next User Summit (probably in London – no date set yet). I hope to have made a lot of progress on my plans by then. So I’ll have interesting experiences to share.

Doing cool stuff with MapleTA without being a Maple expert

I have been using MapleTA for a couple of years now and so far I did not use the Maple graded question type that much. I did not feel the need and frankly I thought that my knowledge of the underlying Maple engine would be insufficient to really do a good job. But working closely with a teacher on digitizing his written exam taught me that you don’t have to be an expert user in Maple in order to create great (exam) questions in MapleTA.

A couple of months ago we started a small pilot: a teacher in Materials Science, an assessment expert and I (being the MapleTA expert) were going to recreate a previously taken written exam in a digital format. Based on the learning goals of the course and the test blueprint, we wanted to create a genuine digital exam that would take full advantage of being digital, and not just an online version of the same questions. The questions should reflect the skills of the students and not just assess them on the numerical values they put into the response fields.

Being so closely involved in the process, and together finding the essential steps and skills students need to show, really challenged me to dive into the system and come up with creative solutions, mostly using the adaptive question type and Maple graded questions.

Adaptive Question Type – Scenarios

The adaptive question type allowed us to create different scenarios within a question:

- A multiple choice question was expanded into a multiple choice question (the main question) with some automatically graded underpinning questions. Students needed to answer all questions correctly in order to get the full score. Missing one question resulted in zero points. Answering the main question correctly would take the student to a set of underpinning questions. Failing the main question would dismiss the student from the underpinning questions and take them directly to the next question in the assignment (not having to waste time on the underpinning questions, since they needed to answer all questions correctly).

This way the chance of guessing the right answer was eliminated, so we did not have to compensate for guessing in the overall test score. The problem itself was posed to the students in a more authentic way: here is the situation, what steps do you need to take to get to the right solution? Posing that main question first requires the students to think about the route, without guiding them along a certain path.

- A numerical question was also expanded into a main-sub question scenario. This time, students who answered the main question correctly (which required them to find their own strategy to work towards the solution) were taken to the next question, since they had shown they could solve the problem. Those who did not get to the correct answer were given a couple of additional questions, to see at what point they went wrong and to let them solve the problem with a little help, while still being able to gain a partial score on the main question.

- Another adaptive question scenario would require all students to go through all the main-sub questions, using the sub questions either to underpin the main question or as help towards the right solution by presenting the steps that need to be taken. This again allows students to show more of their work and thoughts than a plain set of questions, without guiding students too much on ‘how to’ solve the problem.

So much for the adaptive questions, but what about the Maple graded ones?

Maple graded – formula evaluation and Preview

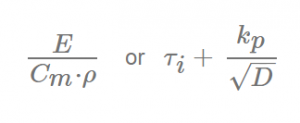

The exam required students to type in a lot of (non-mathematical) formulas used to compute values for material properties or to derive a materials index for selecting the material that best performs under particular circumstances.

For example:

Maple can evaluate this type of question quite well, since the order of the different terms does not influence the outcome of the evaluation. We first tried the symbolic entry mode, but try-out sessions with students showed that the text entry mode was to be preferred, since it speeds up entry of the response.

Then we discovered the power of the preview button. Not only did it warn students about misspelled Greek characters (e.g. lamda instead of lambda), misplaced brackets or incorrect Maple syntax, it also allowed us to provide feedback saying that certain parameters in their response should be elaborated. This can be done by defining Custom Previewing code, like:

if evalb(StringTools[CountCharacterOccurrences]("$RESPONSE","A")=1)

then printf("Elaborate the term A for Surface Area. ")

else printf(MathML[ExportPresentation]($RESPONSE));

end if;

This turned out to be a powerful way to reassure students that their response would not be graded as incorrect because of syntax mistakes or because it was not elaborated to the right level.
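Note that the check in the previewing code above only fires when the response contains exactly one `A`. A possible variant, assuming the hint should also appear when `A` occurs more than once, compares with `>= 1` instead. Everything else is copied from the code above; this variant is a sketch of mine and was not used in the exam described here:

```maple
# Show the hint whenever the response contains at least one "A";
# otherwise show the typeset preview of the response.
if evalb(StringTools[CountCharacterOccurrences]("$RESPONSE","A")>=1)
then printf("Elaborate the term A for Surface Area. ")
else printf(MathML[ExportPresentation]($RESPONSE));
end if;
```

Like the original, this counts every capital `A` in the response text, so it is only suitable when no accepted parameter name contains that letter.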

Point of attention: make sure that the grading code takes into account that students might be sloppy in their use of upper and lower case characters and tend to skip subscripts if these seem insignificant to them. We used the algorithm to prepare the accepted notations and combined them with OR statements in the grading code:

Algorithm:

$MatIndex1=maple("sigma[y]/rho/C[m]");

$MatIndex2=maple("sigma[y]/rho/c[m]");

Grading Code:

evalb(($MatIndex1)-($RESPONSE)=0) or evalb(($MatIndex2)-($RESPONSE)=0);
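When the number of accepted notations grows, chaining `or` statements becomes unwieldy. The following is a minimal sketch in plain Maple (outside MapleTA) of the same check over a list. The names `accepted` and `response` are placeholders of mine; in MapleTA they would correspond to the algorithm variables and `$RESPONSE`. The call to `simplify` is also my addition, and whether this form is convenient in the grading code field of your MapleTA version is something to try out first:

```maple
# Accepted notations as Maple expressions (placeholders for $MatIndex1, $MatIndex2).
accepted := [sigma[y]/rho/C[m], sigma[y]/rho/c[m]]:

# Stand-in for the student's $RESPONSE, written in a different but equivalent order.
response := sigma[y]/(c[m]*rho):

# True as soon as the difference with one accepted notation simplifies to zero.
ormap(a -> evalb(simplify(a - response) = 0), accepted);
```

With these sample values the second accepted notation matches, so the last line should evaluate to `true`.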

Through the MapleCommunity I received an alternative to compensate for the case sensitivity:

Algorithm:

$MatIndex1= "sigma[y]/rho/C[m]";

Grading Code:

evalb(parse(StringTools:-LowerCase("$RESPONSE"))=parse(StringTools:-LowerCase("$MatIndex1")));
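To see what this comparison does, here is a small sketch in plain Maple with concrete strings. The `response` string is a hypothetical example of sloppy student input and `key` stands in for `$MatIndex1`:

```maple
# Two spellings of the same material index, differing only in letter case.
key      := "sigma[y]/rho/C[m]":     # stands in for $MatIndex1
response := "Sigma[Y]/Rho/c[M]":     # hypothetical sloppy student input

# Lowercase both strings first, then parse them into Maple expressions and compare.
evalb(parse(StringTools:-LowerCase(response)) = parse(StringTools:-LowerCase(key)));
```

Both strings parse to the same expression once lowercased, so the comparison should yield `true`. The trade-off is that lowercasing erases every case distinction: if a formula uses both `C` and `c` for different quantities, this approach can no longer tell them apart, and listing the accepted notations explicitly is the safer route.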

Automatically graded ‘key-word’ questions in an adaptive section

In order to use adaptive sections in a question all questions must be graded automatically. This means you cannot pose an essay question, since it requires manual grading. We had some questions where we wanted the students to explain their choice by writing a motivation in text. Both the response to the main question and the motivation had to be correct in order to get the full score. No partial grading allowed.

With nearly 600 students expected to take this exam, the teacher obviously did not want to grade all these motivations by hand. An option would have been to use the ‘keyword’ question type that is presented in the MapleTA demo class, but since it is not a standard question type, it could not be part of an (adaptive) question designer type question. The previously mentioned Custom Preview Code inspired me to use a Maple graded question instead. Going through Metha Kamminga’s manual I found the right syntax to search for a specific string in a response text and grade the response automatically, resulting in the following grading code:

evalb(StringTools[Search]("isolator","$RESPONSE")>=1) or

evalb(StringTools[Search]("insulator","$RESPONSE")>=1);

Naturally, the teacher should also review the responses that did not contain these key words, but that would mean grading only a portion of the 600 student responses, since all responses that were automatically graded and found to be correct would no longer need grading. This saves a considerable amount of time.
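One thing to keep in mind is that `StringTools[Search]` is case-sensitive, so a motivation that starts with "Insulator" would end up in the pile for manual review. A possible variant of the grading code above, assuming you want to accept any capitalization, lowercases the response before searching. This is a sketch and not the code used in the exam:

```maple
# Lowercase the response text first, then look for either key word.
evalb(StringTools[Search]("isolator", StringTools[LowerCase]("$RESPONSE"))>=1) or
evalb(StringTools[Search]("insulator", StringTools[LowerCase]("$RESPONSE"))>=1);
```

The key words themselves must then be written in lower case, as they are here.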

Preliminary conclusions of our pilot

MapleTA offers a lot of possibilities that require little or no knowledge of the Maple engine, but

- It requires careful thinking and anticipation of student behavior.

- Make sure to have your students practice the necessary notations, so they are more confident and familiar with MapleTA’s syntax or ‘whims’ as students tend to call it.

- Take your time before the exam to define the alternatives and check in detail what is accepted and what is not. This saves you a lot of time afterwards, because unfortunately making corrections in the gradebook of MapleTA is cumbersome, time consuming and not user friendly at all.