Posts in category ICTO

Doing more cool stuff with Maple TA without being a Maple Expert

Hybrid Assessment: Combining paper-based and digital questions in MapleTA

In August 2016 we upgraded to Maple TA 2016. I had been looking forward to this moment, since the new version brought some very promising features: improvements to essay questions, better manual online grading, and a new question type I had requested: Scanned Documents.

Some teachers would like students to make a sketch or show the steps of a complex calculation. Because this is hard to do with regular computer-room hardware (mouse and keyboard only), it would be nice to keep that work on paper. But how do you cleverly connect the paper to the gradebook? In my blog post from May 2013 I mentioned an example of such a connecting feature from ExamOnline, which has now been implemented in Maple TA.

Last Friday we had the first exam that actually used this feature: about a hundred students had to mark certain areas in an image of the brain, on paper. The papers were collected, scanned and uploaded as a batch to MapleTA’s gradebook. The exam reviewers found the scanned papers in the gradebook and graded the responses manually.

How does this work?

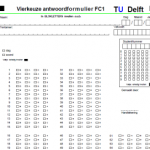

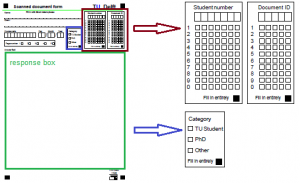

First, to use the batch upload feature, you need to create a paper form that can be recognized in the scanning process. Our basis was the ‘Sonate’ form (see image 1). Sonate is our multiple-choice test system for item and test analysis. This form has certain markings that the scanning software, Teleform, can recognize.

We converted this form into a ‘Maple TA form’ (see image 2) by adding a response box (in green), fields for the student ID and Document ID (in brown) and a user category (in blue). The Document ID is generated by MapleTA; it is a unique numerical code for each student in the test.

In the scanning software we wrote a script that creates a zip file containing all the scanned documents and a CSV file that tells MapleTA which documents are in the zip. As soon as the files are created and saved, an e-mail is automatically sent to support. The support desk uploads the files to MapleTA and notifies the reviewers that everything is set.
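The packaging step can be sketched roughly as follows. This is a minimal Python sketch, not our Teleform script: it assumes each scan is saved as `<document_id>.pdf` and writes a manifest with hypothetical columns `document_id` and `filename`. The exact CSV layout MapleTA expects is described in the MapleTA help, so check it there before relying on this.

```python
import csv
import zipfile
from pathlib import Path
from typing import Tuple


def package_scans(scan_dir: Path, out_dir: Path) -> Tuple[Path, Path]:
    """Bundle scanned PDFs into a zip and write a CSV manifest next to it.

    Assumptions (hypothetical, check the MapleTA help for the real format):
    each scan is saved as '<document_id>.pdf', and the manifest has the
    columns 'document_id' and 'filename'.
    """
    out_dir.mkdir(parents=True, exist_ok=True)
    zip_path = out_dir / "scans.zip"
    csv_path = out_dir / "manifest.csv"

    with zipfile.ZipFile(zip_path, "w", compression=zipfile.ZIP_DEFLATED) as zf:
        with csv_path.open("w", newline="") as fh:
            writer = csv.writer(fh)
            writer.writerow(["document_id", "filename"])  # hypothetical header
            for pdf in sorted(scan_dir.glob("*.pdf")):
                doc_id = pdf.stem
                if not doc_id.isdigit():
                    # Skip files that are not named after a numerical Document ID.
                    continue
                zf.write(pdf, arcname=pdf.name)
                writer.writerow([doc_id, pdf.name])
    return zip_path, csv_path
```

Skipping files without a numerical name is a design choice: a stray file then never ends up in the upload, but it does need to be checked by hand.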

In the gradebook a button appears; clicking it opens the PDF file so the reviewer can grade the student’s response.

The MapleTA help describes this process in detail, including how to upload the files to the gradebook manually. Maplesoft extended this feature by also allowing students to upload their own ‘attachment’, which can be any of the following file types:

This feature is very promising for our online students. At the moment, students taking an online-proctored exam use e-mail to send attachments. Now they might use MapleTA instead, as long as the file type is allowed.

MapleTA and Mobius User Summit 2016 in Vienna

Last week I attended Maplesoft’s MapleTA and Mobius User Summit 2016 in Vienna: two and a half days well spent. This was already the third summit, after Amsterdam (2014) and New York (2015). It is good to meet the same people from the earlier summits, but also good to see new faces as MapleTA becomes more popular in Europe.

For me the summit started with two training sessions, or should I say demo sessions. The first one was about Advanced Question Creation in MapleTA. Jonathan zoomed in on Maple-graded questions. Since I recently took my first steps with Maple-graded questions (see my blog post ‘doing cool stuff..’), I very much welcomed this. He addressed some differences between coding in Maple and in MapleTA and gave us useful tips on how to get around them (including a general solution using the ‘convert’ procedure).

I got quite jealous when he said that at Birmingham they have created grading scripts they can call from the ‘grading code’ field, which makes creating a Maple-graded question a lot easier. Unfortunately, these scripts cannot easily be transferred to other MapleTA installations. The good news: he said he is talking to Maplesoft about integrating them into the software. Let’s hope it gets picked up. Jonathan also referred to the MapleTA Community, where any user can ask questions or respond to other people’s problems. It is active, though still in beta.

The second demo, by Aaron, was on creating lessons and slide shows in Mobius. Although we at Delft had done some pilot projects at the beginning of the year, I was pleasantly surprised by the improvements to some features. The software has not been officially released yet, and the first version will not have all of the features Jim sketched for us during the Mobius Roadshow in September. Still, it holds a lot of promise. At TU Delft we will continue piloting the software.

The conference on Thursday and Friday featured several user presentations covering its themes:

- Shaping Curriculum

- Content Creation (with Mobius)

- User Experience Mobius

- Integrating with your Technology

- The future of Online Education

Eight different institutions presented their implementations, course examples, success stories and troubles. Most interesting, though, were the breaks between the themes, when there was plenty of time to talk with the other participants, whether elaborating on their presentations or just getting to know each other. The opportunity to talk to Maplesoft people about some issues and near-future developments was also very valuable.

On Friday Steve Furino (University of Waterloo) led an interactive session on what the participants’ top 3 future developments should be. This resulted in a list of about 30 items (including several entries on the same topic). Usability, regrading and universal coding across Maple and MapleTA made up the top 3.

Mobius in particular prompted a lot of requests from fellow participants to work together on creating and exchanging materials. We saw some lovely examples from Waterloo: chemistry for engineers, precalculus and computer science. Feel free to click those links and check out the courses. Waterloo is thinking of setting up a workshop focused on developing online STEM courses (using Mobius). The idea is that participants will leave with a completed online STEM course that they can implement immediately. The workshop would last somewhere between 2 and 4 weeks on site (Waterloo, Canada). If you would like to participate in their viability evaluation, follow the link. It sounds like a great idea to me, but I wonder whether being away for 4 weeks would be a problem for most of the people who are interested.

I left the conference full of ideas and with a personal action list. I am already looking forward to the next User Summit (probably in London; no date set yet). I hope to have made a lot of progress on my plans by then, so I’ll have interesting experiences to share.

Doing cool stuff with MapleTA without being a Maple expert

I have been using MapleTA for a couple of years now, and so far I had not used the Maple-graded question type much. I did not feel the need, and frankly I thought my knowledge of the underlying Maple engine would be insufficient to really do a good job. But working closely with a teacher on digitizing his written exam taught me that you don’t have to be an expert Maple user to create great (exam) questions in MapleTA.

A couple of months ago we started a small pilot: a teacher in Materials Science, an assessment expert and I (as the MapleTA expert) set out to recreate a previously administered written exam in a digital format. Based on the learning goals of the course and the test blueprint, we wanted to create a genuine digital exam that would take full advantage of being digital, not just an online version of the same questions. The questions should reflect the students’ skills and not just assess them on the numerical values they enter in the response fields.

Being so closely involved in the process, and jointly identifying the essential steps and skills students need to show, really challenged me to dive into the system and come up with creative solutions, mostly using the adaptive question type and Maple-graded questions.

Adaptive Question Type – Scenarios

The adaptive question type allowed us to create different scenarios within a question:

- A multiple-choice question was expanded into a main multiple-choice question with some automatically graded underpinning questions. Students needed to answer all questions correctly to get the full score; missing one question resulted in zero points. Answering the main question correctly took the student to a set of underpinning questions. Failing the main question skipped the underpinning questions and took the student directly to the next question in the assignment (so they did not waste time on the underpinning questions, since all questions had to be answered correctly to score).

This way the chance of guessing the right answer was practically eliminated, so we did not have to correct for guessing in the overall test score. The problem itself was also posed to the students in a more authentic way: here is the situation, what steps do you need to take to get to the right solution? Posing the main question first requires students to think about the route, without guiding them along a certain path.

- A numerical question was also expanded into a main/sub-question scenario. This time, students who answered the main question correctly (which required finding their own strategy to work towards the solution) were taken to the next question, since they had shown they could solve the problem. Those who did not reach the correct answer were given a couple of additional questions, to see at what point they went wrong and to let them solve the problem with a little help, while still being able to earn a partial score on the main question.

- Another adaptive scenario required all students to go through all the main and sub-questions, using the sub-questions either to underpin the main question or to help towards the right solution by presenting the steps that need to be taken. Again, this allows students to show more of their work and thinking than a plain set of questions would, without guiding them too much on ‘how to’ solve the problem.
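To make the scoring logic of the first two scenarios concrete, here is a minimal Python sketch. It is not how MapleTA’s question designer is configured; it only models the flow, assuming each part is simply right or wrong. The `Outcome` type, the function names and the `max_partial` weight are hypothetical, since the actual point values are not given here.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Outcome:
    score: float          # fraction of the question's points (0.0 to 1.0)
    questions_shown: int  # how many parts the student actually saw


def all_or_nothing(main_correct: bool, sub_correct: List[bool]) -> Outcome:
    """Scenario 1: main multiple-choice question plus underpinning questions.

    A wrong main answer ends the question at once (no time wasted on the
    sub-questions); full marks only if every part is correct.
    """
    if not main_correct:
        return Outcome(score=0.0, questions_shown=1)
    score = 1.0 if all(sub_correct) else 0.0
    return Outcome(score=score, questions_shown=1 + len(sub_correct))


def help_on_failure(main_correct: bool, sub_correct: List[bool],
                    max_partial: float = 0.5) -> Outcome:
    """Scenario 2: numerical main question; sub-questions act as help steps.

    max_partial is a hypothetical cap on the credit a student can still
    earn through the help steps.
    """
    if main_correct:
        return Outcome(score=1.0, questions_shown=1)
    if not sub_correct:
        return Outcome(score=0.0, questions_shown=1)
    partial = max_partial * sum(sub_correct) / len(sub_correct)
    return Outcome(score=partial, questions_shown=1 + len(sub_correct))
```

The third scenario is the same flow without any early exit: every student sees all parts.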

So much for the adaptive questions; but what about the Maple-graded ones?

Maple graded – formula evaluation and Preview

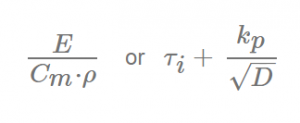

The exam required students to type in a lot of (non-mathematical) formulas used to compute material properties or to derive a material index for selecting the material that performs best under particular circumstances.

For example:

Maple can evaluate this type of question quite well, since the order of the terms does not influence the outcome of the evaluation. We first tried the symbolic entry mode, but try-out sessions with students showed that the text entry mode was preferable, because it speeds up entering the response.

Then we discovered the power of the Preview button. Not only did it warn students about misspelled Greek characters (e.g. lamda instead of lambda), misplaced brackets or incorrect Maple syntax, it also allowed us to give feedback saying that certain parameters in their response should be elaborated further. This can be done by defining custom preview code, like:

```maple
if evalb(StringTools[CountCharacterOccurrences]("$RESPONSE","A")=1) then
    printf("Elaborate the term A for Surface Area. ")
else
    printf(MathML[ExportPresentation]($RESPONSE));
end if;
```

This turned out to be a powerful way to reassure students that their response would not be marked incorrect because of syntax mistakes or because they had not elaborated it to the right level.
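The same preview idea can be sketched outside MapleTA. This minimal Python sketch swaps in a different technique: instead of counting how often the character `A` occurs (the Maple code above requires exactly one occurrence), it looks for `A` as a standalone symbol with a regular expression, so `A*A` is flagged but `Alpha` or `sigma[A]` is not. The `ELABORATE` table and the `preview` function are hypothetical illustrations.

```python
import re

# Hypothetical table: symbols students must write out further, with a hint.
ELABORATE = {"A": "Elaborate the term A for Surface Area."}


def preview(response: str) -> str:
    """Return a hint if a symbol needs elaborating, else echo the response."""
    for symbol, hint in ELABORATE.items():
        # Match the symbol only as a standalone name: not inside another
        # name (e.g. 'Alpha') and not as or before a subscript ('sigma[A]').
        pattern = rf"(?<![\w\[]){re.escape(symbol)}(?![\w\[])"
        if re.search(pattern, response):
            return hint
    return response
```

For example, `preview("F/A")` returns the hint, while `preview("F/(pi*r^2)")` simply echoes the response.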

Point of attention: make sure the grading code takes into account that students may be sloppy with upper- and lowercase characters and tend to skip subscripts if they seem insignificant. We used the algorithm section to prepare the accepted notations and combined them with OR statements in the grading code:

Algorithm:

```maple
$MatIndex1=maple("sigma[y]/rho/C[m]");
$MatIndex2=maple("sigma[y]/rho/c[m]");
```

Grading Code:

```maple
evalb(($MatIndex1)-($RESPONSE)=0) or evalb(($MatIndex2)-($RESPONSE)=0);
```

Through the MapleCommunity I received an alternative that compensates for case sensitivity:

Algorithm:

```maple
$MatIndex1= "sigma[y]/rho/C[m]";
```

Grading Code:

```maple
evalb(parse(StringTools:-LowerCase("$RESPONSE"))=parse(StringTools:-LowerCase("$MatIndex1")));
```
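For readers who want to experiment with the same idea outside MapleTA, here is a minimal Python sketch of a case-insensitive equivalence check. It swaps Maple’s symbolic comparison for a different technique: evaluating both formulas at a few random points. It assumes responses use only `+ - * / ^`, parentheses and subscripts written as `name[sub]`; the function names are hypothetical. Like the Maple alternative, case folding also merges symbols that genuinely differ only in case, so it is only safe when a formula has no such pairs. The `eval` call is for illustration only and should not be used on untrusted input in production.

```python
import math
import random
import re

_SUBSCRIPT = re.compile(r"([A-Za-z]\w*)\[(\w+)\]")
_NAME = re.compile(r"(?<![\w.])[A-Za-z_]\w*")
_ALLOWED = re.compile(r"[\w\s+\-*/().]*")


def _normalize(expr: str) -> str:
    """Fold case, write name[sub] as name_sub and Maple's ^ as Python's **."""
    expr = _SUBSCRIPT.sub(r"\1_\2", expr).lower().replace("^", "**")
    if "__" in expr or not _ALLOWED.fullmatch(expr):
        raise ValueError(f"unsupported input: {expr!r}")
    # Prefix every name so Greek letters such as 'lambda' are not Python keywords.
    return _NAME.sub(lambda m: "v_" + m.group(0), expr)


def equivalent(expected: str, response: str, trials: int = 5) -> bool:
    """Case-insensitive check that two formulas agree at random sample points."""
    try:
        a, b = _normalize(expected), _normalize(response)
    except ValueError:
        return False
    names = set(_NAME.findall(a)) | set(_NAME.findall(b))
    rng = random.Random(0)
    for _ in range(trials):
        # Positive values in [1, 2] avoid division by zero in typical formulas.
        env = {name: rng.uniform(1.0, 2.0) for name in names}
        try:
            va = eval(a, {"__builtins__": {}}, env)
            vb = eval(b, {"__builtins__": {}}, env)
            if not math.isclose(va, vb, rel_tol=1e-9):
                return False
        except (ArithmeticError, SyntaxError, TypeError, NameError):
            return False
    return True
```

For example, `equivalent("sigma[y]/rho/C[m]", "sigma[y]/(rho*c[m])")` is `True`, because both the case and the grouping of the terms are ignored.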

Automatically graded ‘key-word’ questions in an adaptive section

To use adaptive sections in a question, all parts must be graded automatically. This means you cannot include an essay question, since it requires manual grading. We had some questions where we wanted students to explain their choice by writing a short motivation. Both the response to the main question and the motivation had to be correct to get the full score; no partial credit was allowed.

With nearly 600 students expected to take this exam, the teacher obviously did not want to grade all these motivations by hand. One option would have been the ‘keyword’ question type presented in the MapleTA demo class, but since it is not a standard question type, it could not be part of an (adaptive) Question Designer question. The custom preview code mentioned above inspired me to use a Maple-graded question instead. In Metha Kamminga’s manual I found the right syntax to search for a specific string in a response text and grade the response automatically, resulting in the following grading code:

```maple
evalb(StringTools[Search]("isolator","$RESPONSE")>=1) or
evalb(StringTools[Search]("insulator","$RESPONSE")>=1);
```

Naturally, the teacher should still review the responses that did not contain these keywords, but that means grading only a portion of the 600 responses, since the responses that were automatically graded as correct no longer need manual grading. This saves a considerable amount of time.
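The resulting split between auto-credited responses and responses left for manual review can be sketched in Python. This is a minimal sketch, not the Maple grading code: the student IDs and the dictionary layout are hypothetical, and it folds case on both sides so that, for example, ‘Insulator’ at the start of a sentence is also found, a variation on the earlier lowercase tip rather than part of the original grading code.

```python
from typing import Dict, Iterable, List, Tuple

# Accepted key words, taken from the grading code above.
KEYWORDS = ("isolator", "insulator")


def triage(responses: Dict[str, str],
           keywords: Iterable[str] = KEYWORDS) -> Tuple[List[str], List[str]]:
    """Split responses into auto-credited and needs-manual-review lists."""
    folded = [k.casefold() for k in keywords]
    auto_credit: List[str] = []
    review: List[str] = []
    for student_id, text in responses.items():
        body = text.casefold()
        if any(k in body for k in folded):
            auto_credit.append(student_id)
        else:
            review.append(student_id)
    return auto_credit, review
```

A plain substring search cannot see negation (‘not an insulator’ also matches), so a quick spot check of the auto-credited list is still worthwhile.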

Preliminary conclusions of our pilot

MapleTA offers a lot of possibilities that require little or no knowledge of the Maple engine, but

- It requires careful thinking and anticipation of student behavior.

- Make sure your students practice the necessary notations, so they are more confident with MapleTA’s syntax, or its ‘whims’ as students tend to call it.

- Take your time before the exam to define the alternatives and check in detail what is accepted and what is not. This saves you a lot of time afterwards, because, unfortunately, making corrections in the MapleTA gradebook is cumbersome, time-consuming and not user-friendly at all.

Setting up a user group for Maple T.A.

At TU Delft we have taken the initiative to form a user group for Maple T.A.

Maple T.A. is used in many places in higher education. With every version, Maple T.A. gains more extensive capabilities for taking digital testing to a higher level. To make better use of these capabilities, and to share and safeguard the Maple T.A. knowledge and experience gained here and there instead of letting it get lost, we want to start a user group. With the survey below we would like to gauge the interest among Maple T.A. users in taking part in a user group.

Within a few days almost 20 responses had already come in from various institutions, so we will definitely start.

Missed the survey but still interested? You can still fill it in at: http://goo.gl/forms/4rYF8B41Pt

ATP Conference 2016 – two interesting applications

During my visit to the ATP conference last week, two applications caught my interest: Learnosity and Metacog.

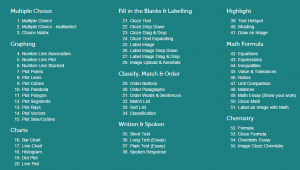

Learnosity is an assessment system that, in my humble opinion, takes Technology Enhanced Item types (TEIs) to the next level. With 55 different question templates, it might be hard to choose the one that best suits the situation, but at least it goes beyond the TEIs most speakers mentioned (drag & drop, hotspot, multiple response and video).

Learnosity can handle (simple) open math and open chemistry questions that can be graded automatically: nowhere near to the extent that Maple T.A. can, but certainly usable in lots of situations. It supports automatically graded charts (bar, line and point) and graphs (linear, parabolic and trigonometric), and it has handwriting recognition. These are interesting features that should become available in many more applications.

It must be said that handwriting recognition sounds nice, but as long as we have no digital slates in our exam rooms it cannot be fully appreciated (try writing with your mouse and you’ll understand what I mean). But being able to drag a digital protractor or ruler onto the screen to measure angles or distances is really cool.

For me the most interesting aspect of this assessment system was its user-friendliness. I need to dig deeper to see what we can learn from it, but it certainly looks promising.

Metacog is interesting because it captures the process of getting to the final answer. You can run it while the student is working on a problem: it captures the activities you define, and afterwards the system can generate reports on them. Participation, behaviour, time on task and sequence of events are just a few of the reports it can produce. This makes it a very interesting tool for gathering learning analytics data, and it can be integrated into any online learning activity. Here’s a video that explains Metacog better.
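To give an idea of what reports such as ‘time on task’ and ‘sequence of events’ boil down to, here is a minimal Python sketch over a captured event log. It is not Metacog’s API or data format; the `Event` record and both functions are hypothetical, and time on task is approximated as the span between a student’s first and last captured event.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Event:
    student: str
    t: float      # seconds since the start of the activity
    action: str   # an activity you chose to capture


def sequence_of_events(events: List[Event], student: str) -> List[str]:
    """Captured actions of one student, in chronological order."""
    own = [e for e in events if e.student == student]
    return [e.action for e in sorted(own, key=lambda e: e.t)]


def time_on_task(events: List[Event]) -> Dict[str, float]:
    """Per student: time between the first and last captured event.

    A rough proxy: idle periods in between are counted as time on task.
    """
    first: Dict[str, float] = {}
    last: Dict[str, float] = {}
    for e in events:
        first[e.student] = min(first.get(e.student, e.t), e.t)
        last[e.student] = max(last.get(e.student, e.t), e.t)
    return {s: last[s] - first[s] for s in first}
```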

[vimeo]https://vimeo.com/148498871[/vimeo]

ATP Conference 2016 – A whole different ballgame, so what to learn from it?

This past week my colleague, a testing expert, and I went to the ATP Conference 2016 in Florida. ATP stands for the Association of Test Publishers. In the US, national tests are everywhere: for each level of education (K-12, middle school, high school, college and especially professional education), tests are developed for nationwide use. The conference crowd consisted largely of companies that develop and deploy tests for school districts or for the professional field (health care, legal, navy, military, etc.). I soon found out that these people play in the major league of test development, usability studies and item analysis, while we at our university would be lucky to find ourselves in the little league.

To be fair: the number of students taking exams at a university will never come near the number of test takers they develop for. In our situation, item reuse is a requirement only in some cases; in many cases reuse is not even desirable. And their means (manpower, time and money) are impossible for us to match. So what can we learn from the professionals?

Our special interest at this conference was the sessions on Technology Enhanced Item types (TEIs), since we are conducting research into certain test-form scenarios that include TEIs and constructed-response items. For me, the learning points lie mainly in the usability studies these companies do.

Apparently, the majority of the tests created by these test publishers consist of multiple-choice questions (the so-called bubble-sheet tests), and test developers in the US are starting to look towards TEIs for more authentic testing. So TEIs were a hot topic. In my first session I was somewhat surprised that all question types other than multiple choice were considered TEIs. Drag & drop items were especially popular. I did not think this kind of question type would cause many problems for test takers, but apparently I was wrong.

The usability of these drag & drop items turned out to be much more complicated than I thought. Is it clear where students need to drop their response? Will near placement of the response cause the student to fail? What if the response is partly within and partly outside the designated area (which is invisible to the student)? How accurate do they need to be? When do you use a hotspot and when do you drag an item onto an image? Do our students encounter the same kind of uncertainties as the American test takers, or are they more savvy in this matter? We do see students make mistakes in handling our ‘adaptive question’ type, and since we are introducing different scenarios, I am even more aware than before that we should be clearer to our students about what to expect. We now have a better idea of what to look for when conducting our usability study on ‘adaptive questions’, and we will not assume that taking a test with TEIs is as easy as it seems.
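The question of partial placement comes down to a scoring rule that the item author has to choose. The following minimal Python sketch shows one possible rule, not the behaviour of any particular assessment system: a drop counts as correct when at least a chosen fraction of the dragged item’s area lies inside the (invisible) target zone. The `Rect` type, the function names and the 0.5 default threshold are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Rect:
    x: float
    y: float
    w: float  # assumed > 0
    h: float  # assumed > 0

    def area(self) -> float:
        return self.w * self.h


def overlap_fraction(item: Rect, zone: Rect) -> float:
    """Fraction of the dropped item's area that lies inside the target zone."""
    dx = min(item.x + item.w, zone.x + zone.w) - max(item.x, zone.x)
    dy = min(item.y + item.h, zone.y + zone.h) - max(item.y, zone.y)
    if dx <= 0 or dy <= 0:
        return 0.0
    return (dx * dy) / item.area()


def is_correct_drop(item: Rect, zone: Rect, threshold: float = 0.5) -> bool:
    """Accept the drop when at least `threshold` of the item is in the zone."""
    return overlap_fraction(item, zone) >= threshold
```

Whatever threshold you pick, it is worth telling students how precise they need to be, which is exactly the kind of uncertainty raised above.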

This conference made me aware that we need to make an effort to teach our instructors and support staff the principles of usability, and to be stricter in applying them. This fits perfectly with our research project deliverables and the online instruction modules we are about to set up.

MapleTA – developments over the summer

Over the past couple of months, digital examination in Maple TA has made a giant leap forward. The server has been upgraded to accommodate 750 students taking an exam concurrently. Security has been significantly improved and tested, and we have been able to create two large exam rooms with 225 and 250 seats respectively. During the first week of October, first-year students in Mechanical Engineering and Maritime Engineering inaugurated the new setting, taking midterms each day.

Momentum

At the end of June, the board of education directors requested a pilot to show whether the MapleTA exam system could be scaled to 700 concurrent students. This pushed the existing project ‘digital testing campus-wide’ towards ‘a grand finale’. All the necessary disciplines got together: Education and Student Affairs (Scheduling Affairs), Facility Management, Teaching Staff, IT Operations and IT Development. They discussed the whole process of digital testing and found solutions to the problems identified.

Exam rooms

The former PMB hall in IO was transformed into three adjoining rooms with a total of 225 seats, and exam room Drebbelweg 2 was chosen for conversion into a computer exam room with 250 seats. The PMB hall has PCs that can be used for both teaching and exams. During an exam period these PC rooms are not available for other activities, so IT can make sure the hardware is checked and repaired if necessary. Drebbelweg 2 is equipped with laptops and an upgraded wireless network; it will be converted into a digital exam room during exam periods.

These exam rooms are available for all faculties.

Security

A completely new secure environment was designed, tested and implemented. The exam server can only be reached through a secure (HTTPS) connection, and only from within the campus network. This secure layer prevents students from using the internet, their home directories or other applications; students can only log in to the computer with the exam account, and the exam can only be taken from the exam room.
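The access rule described above (HTTPS only, and only from inside the network) can be expressed in a few lines. In practice such a rule is enforced by firewall and web-server configuration rather than application code; this minimal Python sketch only states the rule. The subnets are hypothetical placeholders taken from the RFC 5737 documentation ranges, not TU Delft’s real networks, and the function name is illustrative.

```python
import ipaddress
from typing import Sequence

# Hypothetical exam-room subnets (documentation ranges, not real campus networks).
EXAM_ROOM_NETWORKS = [
    ipaddress.ip_network("192.0.2.0/25"),
    ipaddress.ip_network("198.51.100.0/24"),
]


def request_allowed(client_ip: str, scheme: str,
                    networks: Sequence[ipaddress.IPv4Network] = EXAM_ROOM_NETWORKS) -> bool:
    """Allow a request only over HTTPS and only from an exam-room subnet."""
    if scheme.lower() != "https":
        return False
    try:
        addr = ipaddress.ip_address(client_ip)
    except ValueError:
        return False  # not a valid IP address
    # An IPv6 address is never "in" an IPv4 network, so it is rejected too.
    return any(addr in net for net in networks)
```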

At the moment, MapleTA is the only application that can run in this secure environment. We plan to add more applications, so they can be used in the secure environment either with or without MapleTA.

Inauguration

After a summer of hard work to get everything in place, on Monday 1 October at nine in the morning the first group of 460 3mE students took a Calculus test. Though there were some problems getting started, the exam session ran quite smoothly, establishing that the concept worked. On Tuesday and Wednesday some server capacity issues became apparent, forcing up to 15% of the students to switch to a paper copy. Fortunately, the last two exams ran smoothly.

Curious?

If you would like to know more about the new situation or digital testing in general, don’t hesitate to contact me.

Secure testing with Blackboard (2)

One part of securing a digital exam is making sure that the exam was taken from the exam room. This can probably be arranged technically, but I think it can also be done ‘the old-fashioned way’: by making the exam available only to those who are actually present in the room. When there are several sessions of the same exam, this approach lets you open the exam for each session only to the students present at that session.

The example I describe concerns an exam in Blackboard, but it also applies to a Maple TA test in Bb.

To prepare for the exam, the teacher makes the exam (Assignment) available to one or more groups using Adaptive Release. For each session, a test version is linked to a (still empty) group. In the Adaptive Release menu the teacher can choose to put all results in the same Grade Center column. This prevents the results from becoming scattered.

In addition, the teacher creates a ‘sign-up list’ in Bb for each session. Several lists can be created per session, so that students can register with different invigilators. Note: the sign-up list must be unavailable to students.

On entering the exam room, the student reports to the invigilator. The invigilator ticks the student off as present on the sign-up list and checks the student card.

At the start of the exam, the sign-up lists are linked to the group of the corresponding exam session, and everyone present then has access to the exam.
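The procedure above can be modelled as a small piece of logic. This is a minimal Python sketch of the idea only, not Blackboard’s API: the `ExamSession` class and its methods are hypothetical, with one sign-up list per invigilator and a group that stays empty until the session starts.

```python
from dataclasses import dataclass, field
from typing import Dict, Set


@dataclass
class ExamSession:
    """One exam session with its own (initially empty) Adaptive Release group."""
    name: str
    group: Set[str] = field(default_factory=set)
    # One sign-up list per invigilator; hidden from students.
    sign_up_lists: Dict[str, Set[str]] = field(default_factory=dict)
    started: bool = False

    def check_in(self, invigilator: str, student_id: str, card_checked: bool) -> None:
        """The invigilator ticks the student off after checking the student card."""
        if card_checked:
            self.sign_up_lists.setdefault(invigilator, set()).add(student_id)

    def start(self) -> None:
        """At the start, everyone on this session's lists joins its group."""
        for present in self.sign_up_lists.values():
            self.group |= present
        self.started = True

    def can_open_exam(self, student_id: str) -> bool:
        return self.started and student_id in self.group
```

Because each session has its own group, a student checked in at the morning session cannot open the afternoon version.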

Secure testing with Blackboard

As I blogged before, at TU Delft we can set up security on a test in Blackboard. This means that no other applications can be started during the test. Activating the Lockdown Browser (LDB) is simple and can easily be done by the teacher.

At the end of January another exam was held at IO using the LDB: two resit sessions in the morning and three exam sessions in the afternoon, in which two partial exams were taken. The lessons learned from the previous time had been taken into account:

- A manual was placed next to each computer explaining how to start the exam.

- The course menu and the ‘Assignment’ folder had been cleaned up beforehand, so the exam was easy to find within the course.

- 3 attempts were allowed, so that if a test was interrupted for some reason, the student could start a new attempt without having to wait for an invigilator to delete the first attempt.

- Randomization of the questions was switched off.

Passwords

When setting up this exam, we chose not to set a password on the Bb test, because being prompted for two passwords in a row (first the LDB password, then the Bb test password) had been somewhat confusing last time. During the morning sessions, however, it turned out that in this case (no password on the Bb test) the LDB does not ask for a password either. So for the afternoon sessions a password was set on the Bb test after all.

Once the student has entered the LDB password, Bb automatically fills in the Bb test password, so you only need to click ‘submit’ to open the test.

Logging in to the computer

In the computer rooms, students can log in to the PC with their own NetID. Using their own profile occasionally caused problems:

- For two students, the font in the test became so small that the test was no longer readable

- For a few students, the LDB turned out not to be in the program list

- For some, MSN or other applications started automatically. These must be closed before the LDB starts (the LDB indicates this itself)

In addition, some students found that the taskbar disappeared when they left the first partial exam. To start the second partial exam they had to restart the session (log off, log on). This made students doubt whether their first partial exam had been saved.

To make the environment easier to control, it is better to work with functional accounts. Ideally these functional accounts are cleaned up between exam sessions, but it is questionable whether that is practically feasible.

Secured vs unsecured

Due to circumstances (the LDB client turned out not to be installed in all rooms), one group of students had to work without the LDB. For them a separate exam session had to be created in Bb, because the Bb test cannot be started outside the LDB. In these sessions only 1 attempt was allowed. This group had considerably fewer of the problems mentioned above than the ‘secured’ group: for only 2 of the roughly 100 students did the teacher have to make the test available again because it had frozen. In the ‘secured’ group, considerably more students had to restart.

Because the ‘secured’ group could see that 3 attempts were allowed, they thought they could retake the exam after a ‘fail’ score. So from an administrative point of view, allowing multiple attempts is desirable (the results of an interrupted session do not have to be deleted before a new session can be started), but it is confusing for students.

Conclusion

It is clear that digital testing still has its ‘sweaty palms’ moment at the start of every session. The computers in the standard computer rooms are not always ‘stable’ enough for an exam setting, which is an extra argument for ‘dedicated’ exam rooms.